If time is money, then clocks are rather an important part of the whole picture. Let’s see how we handle time ticking in a computer system.

Quartz and Atomic Clocks

If you have a wristwatch or a laptop, you probably get your time from a quartz clock. Quartz is a material that vibrates at a certain frequency, and that frequency can be tuned by cutting the quartz into a certain shape and size. Add some electronics and we get an oscillator that resonates at a fairly stable frequency. By counting those cycles we can measure elapsed time.

Although quartz clocks are fairly accurate (we use them every day), they are not perfect: a clock can run fast or slow. The causes include manufacturing differences and temperature, where a significant change can cause a clock to slow down.

The rate at which a clock runs fast or slow is called drift, and it is measured in parts per million (ppm). A clock with a drift of 1 ppm is off by about 86 milliseconds per day, or about 32 seconds per year. Most computer quartz clocks have a drift of about 20-30 ppm.
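The drift arithmetic is easy to check: the accumulated error is the elapsed time multiplied by the drift in ppm and divided by one million. Here is a minimal sketch, where `DriftEstimate` and `errorSeconds` are names made up for this illustration and a year is assumed to be 365 days.

```java
import java.time.Duration;

// Hypothetical helper for illustration; not part of any library.
final class DriftEstimate {

    /** Error in seconds accumulated over `elapsed` by a clock drifting at `ppm` parts per million. */
    static double errorSeconds(double ppm, Duration elapsed) {
        return elapsed.getSeconds() * ppm / 1_000_000.0;
    }

    public static void main(String[] args) {
        Duration day = Duration.ofDays(1);
        Duration year = Duration.ofDays(365); // assumption: a non-leap year
        for (double ppm : new double[] {1, 20, 30}) {
            System.out.printf("%4.0f ppm: %.4f s/day, %.1f s/year%n",
                    ppm, errorSeconds(ppm, day), errorSeconds(ppm, year));
        }
    }
}
```

At 20-30 ppm this works out to roughly 1.7 to 2.6 seconds of error per day if the clock is never corrected.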

Atomic Clocks

If a quartz clock is not good enough for you, you can buy yourself an atomic clock (for a couple of thousand dollars). An atomic clock is based on quantum effects in atoms and is much more accurate than a quartz one. The “vibration” of an energized cesium-133 atom, or more precisely the frequency of the radiation tied to one of its energy transitions, is what is now used for ultimate timekeeping. It also gives us the definition of a second: exactly 9,192,631,770 of those atomic “ticks”. The accuracy of an atomic clock is about a 1-second error in 3 million years. That’s pretty accurate.

Clock Synchronization

We now know that computers use a quartz clock to track physical time (when you see the price of an Apple computer you’d think it has an atomic clock inside). This clock has its own battery, so it keeps ticking when you turn off your computer. We also know that, because of the clock drift we mentioned earlier, the clock error will gradually increase.

This growing error produces what is called clock skew, which is the difference between two clocks (the timestamps they produce) at the same instant in time.

So when you take two computers from a network and read their timestamps at the same time, you’d like those timestamps to match, that is, their skew to be zero. But because their quartz clocks can and will mismeasure the elapsed time (since 1 January 1970, the Unix epoch), those timestamps will not match and, depending on the conditions we mentioned earlier, the difference can be significant.

To mitigate this as much as possible we use clock synchronization. The goal is to minimize the skew, to bring it as close to zero as possible (in the types of networks we have, it is not possible to reduce it to exactly zero).

The basics of clock synchronization are this: periodically get the current time from a server that has the correct time (directly from an atomic clock or from another source that can provide it, e.g. a GPS satellite) and adjust the local time accordingly.

There are a couple of protocols for time synchronization, and the most widely used is the Network Time Protocol (NTP). Another one is the Precision Time Protocol (PTP).

Network Time Protocol (NTP)

The way NTP works is that every computer (or router, or any other device) acts as an NTP client that queries an NTP server for an accurate time reading. The client then adjusts its own time based on the one from the server.

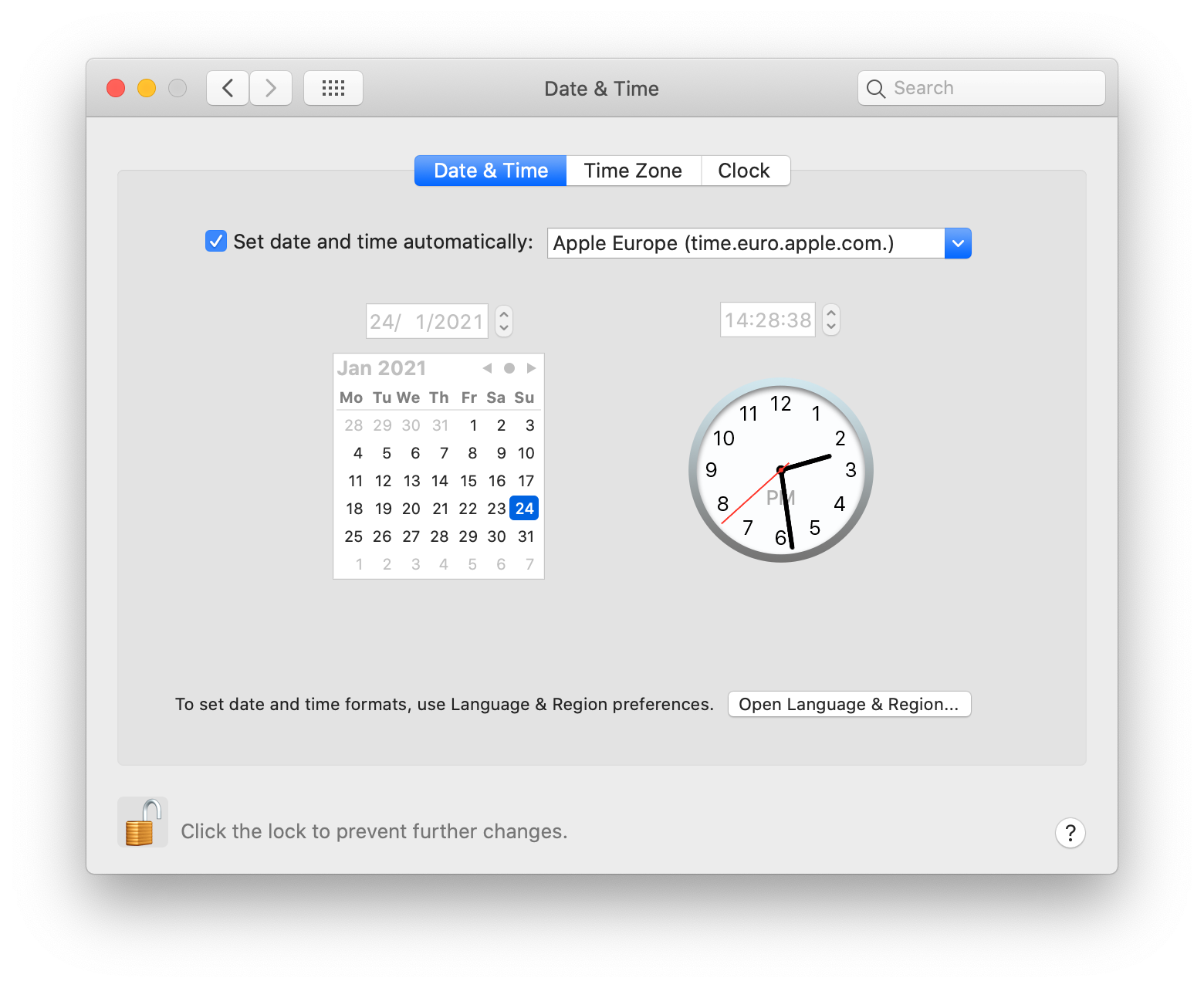

NTP is ubiquitous today: basically every OS vendor ships it with their OS and provides NTP servers that clients can query.

Here time.euro.apple.com is the NTP server that my machine, the NTP client, queries to get the accurate time.

But even though the server has an accurate time, the client can only estimate the skew. Network latency and the server’s request processing time both get in the way, and the client has to measure them with its own local clock, which is itself off. So the result of the whole synchronization algorithm on the client is a best estimate of the skew; it simply cannot be reduced to zero. In good network conditions the skew can be reduced to a couple of milliseconds, but it can be much worse. Wikipedia has a great page if you’re interested in more NTP details.
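To make the "best estimate" concrete, here is a sketch of the standard NTP calculation from four timestamps: t1 (client sends the request), t2 (server receives it), t3 (server sends the reply) and t4 (client receives the reply). The formulas come from the NTP specification rather than from this article, and the class name, method names and sample values are made up for illustration.

```java
// Illustrative sketch only; real NTP clients also filter and combine many samples.
final class NtpOffsetSketch {

    /** Round-trip network delay: total time seen by the client minus server processing time. */
    static long roundTripDelayMillis(long t1, long t2, long t3, long t4) {
        return (t4 - t1) - (t3 - t2);
    }

    /** Estimated offset of the server clock relative to the client (positive: client is behind). */
    static double offsetMillis(long t1, long t2, long t3, long t4) {
        return ((t2 - t1) + (t3 - t4)) / 2.0;
    }

    public static void main(String[] args) {
        // Assumed scenario: the client is really 50 ms behind the server,
        // the network takes 10 ms each way, and the server needs 2 ms to reply.
        long t1 = 1000, t2 = 1060, t3 = 1062, t4 = 1022;
        System.out.println("delay  = " + roundTripDelayMillis(t1, t2, t3, t4) + " ms");
        System.out.println("offset = " + offsetMillis(t1, t2, t3, t4) + " ms");

        // Same true skew, but an asymmetric network: 15 ms out, 5 ms back.
        // The estimate is now off by half of the asymmetry.
        System.out.println("offset = " + offsetMillis(1000, 1065, 1067, 1022) + " ms");
    }
}
```

The calculation assumes the request and the reply take equally long. When they do not, as in the second sample, the error goes straight into the estimate, which is one reason the skew can never be driven to exactly zero.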

What is important to mention here is how a client handles different skew sizes:

- Skew < 125 ms – the client will slew the clock. This means that it will gradually, over about 5 minutes, bring its clock into alignment with the server time by speeding it up or slowing it down. The correction is spread more or less evenly over those 5 minutes.

- 125 ms <= skew < 1000 s – the client will step the clock. It will basically do a hard reset of the local clock (move it forward or backward) to the estimated server timestamp.

- Skew >= 1000 s – the client will panic and do nothing, which simply means it will leave the problem for an admin to handle.
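The three cases above can be sketched as a small decision function. This is only an illustration of the thresholds quoted in this article, not how any particular NTP client is implemented; `SkewPolicy`, `Action` and `decide` are hypothetical names, and treating a skew of exactly 1000 s as a panic is an assumption.

```java
import java.time.Duration;

// Hypothetical sketch of the slew/step/panic decision described in the list above.
final class SkewPolicy {

    enum Action { SLEW, STEP, PANIC }

    // Thresholds as quoted in the article.
    static final Duration STEP_THRESHOLD = Duration.ofMillis(125);
    static final Duration PANIC_THRESHOLD = Duration.ofSeconds(1000);

    /** Picks the correction strategy from the estimated skew (the sign does not matter). */
    static Action decide(Duration skew) {
        Duration magnitude = skew.abs();
        if (magnitude.compareTo(STEP_THRESHOLD) < 0) {
            return Action.SLEW;   // small error: adjust the clock rate gradually
        }
        if (magnitude.compareTo(PANIC_THRESHOLD) < 0) {
            return Action.STEP;   // larger error: jump straight to the estimated time
        }
        return Action.PANIC;      // huge error: do nothing and leave it to an admin
    }

    public static void main(String[] args) {
        System.out.println(decide(Duration.ofMillis(-40)));
        System.out.println(decide(Duration.ofSeconds(3)));
        System.out.println(decide(Duration.ofHours(1)));
    }
}
```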

Wall clocks and Monotonic clocks

If you want to read the current date and time, you get it from a time-of-day clock, also called a wall clock. In Java you would call System.currentTimeMillis(), and on Linux you can generally call clock_gettime(CLOCK_REALTIME). The wall clock is synchronized and is subject to the NTP corrections we mentioned earlier, most importantly stepping.

Now you can probably see why this clock is not good for measuring elapsed time, as in something like this:

    long startTime = System.currentTimeMillis();
    doStuff(); // while executing, an NTP step can happen
    long endTime = System.currentTimeMillis();
    long elapsed = endTime - startTime; // elapsed time can be negative

What can happen here is that, while doStuff() is executing, a clock synchronization (an NTP step) resets the clock. When you then call System.currentTimeMillis() again to get endTime and subtract startTime from it, the result can be negative or much larger than it should be (depending on whether the local clock was running fast or slow). Besides NTP stepping, a wall clock is also subject to leap second adjustments.

This is why the wall clock is not good for measuring elapsed time, and it is exactly why we have monotonic clocks.

A monotonic clock is a clock on which NTP stepping has no effect. Slewing can still happen, which improves the accuracy of the clock, but no sudden jumps will occur. While the wall clock measures time from, say, the Unix epoch, a monotonic clock starts counting from some arbitrary moment, e.g. when the machine booted up. Its main purpose is to provide near-constant ticks, so that when you want to measure something, no sudden jumps will happen.

To read a monotonic clock in Java you can call System.nanoTime() and on Linux it is clock_gettime(CLOCK_MONOTONIC). Now we can measure doStuff() properly:

    long startTime = System.nanoTime();
    doStuff();
    long endTime = System.nanoTime();
    long elapsed = endTime - startTime;

So the monotonic clock has no meaning other than measuring elapsed time on a single node. You can’t get the current date and time by calling System.nanoTime():

    01-24-2021 - 07:47:00.728 // System.currentTimeMillis()
    06-08-1972 - 04:49:37.117 // System.nanoTime()

But when you need to share your timestamps with other nodes in the system, or compare them against theirs, you use the wall clock. For example, your machine has to check the validity dates of a server’s TLS certificate against the current date and time, and for that a monotonic clock does not make any sense.

Many developers think the main difference between these two calls is that nanoTime() returns nanoseconds and is therefore more precise, but that is just a superficial difference. The real difference is the one described above.
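If you measure elapsed time in many places, it can help to wrap the monotonic clock in a small helper so that nobody reaches for the wall clock by accident. A minimal sketch follows; `ElapsedTimer` and `time` are hypothetical names, and the sleeping task just stands in for doStuff().

```java
import java.time.Duration;

// Hypothetical helper: measures elapsed time with the monotonic clock only.
final class ElapsedTimer {

    /** Runs the task and returns how long it took, based on System.nanoTime(). */
    static Duration time(Runnable task) {
        long start = System.nanoTime();
        task.run();
        // Only the difference of two nanoTime() readings is meaningful.
        return Duration.ofNanos(System.nanoTime() - start);
    }

    public static void main(String[] args) {
        Duration elapsed = time(() -> {
            try {
                Thread.sleep(50); // stand-in for doStuff()
            } catch (InterruptedException e) {
                Thread.currentThread().interrupt();
            }
        });
        System.out.println("doStuff took about " + elapsed.toMillis() + " ms");
    }
}
```

Returning a Duration instead of a raw long keeps the unit explicit and makes it harder to mix a nanoTime() reading with a currentTimeMillis() one.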

Logical Clocks

Everything we mentioned above is about physical clocks. Even with the clock synchronization protocols we have, that is not enough in a distributed system, because even a skew of a few microseconds can break the algorithms in place. If you want to ensure some kind of ordering of events, you can’t use a physical clock (imagine a distributed database or a cache handling thousands of reads and writes per second). That is why in distributed systems we use something called a logical clock.

Logical clocks, like monotonic clocks, don’t mean anything in the sense of reading the current date and time. While a physical clock counts the number of seconds elapsed, a logical clock counts the number of events that have occurred. That way the desired ordering of events and causality can be maintained (we can tell whether event A happened before event B). The two main logical clock algorithms are the Lamport clock and the vector clock.
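To give a flavor of what "counting events" means, here is a minimal sketch of a Lamport clock. Every node keeps a counter, increments it on each local event or send, and on receive jumps past the timestamp carried by the message. The class and method names are made up for this illustration, and message passing is simulated by handing a number from one object to another.

```java
import java.util.concurrent.atomic.AtomicLong;

// Minimal Lamport clock sketch; names are illustrative, not from any library.
final class LamportClock {

    private final AtomicLong counter = new AtomicLong(0);

    /** Local event or message send: advance the counter and return the new timestamp. */
    long tick() {
        return counter.incrementAndGet();
    }

    /** Message receive: move past both the local and the sender's timestamp. */
    long onReceive(long remoteTimestamp) {
        return counter.updateAndGet(local -> Math.max(local, remoteTimestamp) + 1);
    }

    long current() {
        return counter.get();
    }

    public static void main(String[] args) {
        LamportClock nodeA = new LamportClock();
        LamportClock nodeB = new LamportClock();

        nodeA.tick();                          // local event on A
        long sent = nodeA.tick();              // A sends a message stamped with its clock
        long received = nodeB.onReceive(sent); // B receives it

        // The receive is always ordered after the send.
        System.out.println("sent=" + sent + ", received=" + received);
    }
}
```

Note the direction of the guarantee: if event A happened before event B, A's timestamp is smaller, but a smaller timestamp alone does not prove that one event happened before the other. Closing that gap is what vector clocks are for.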

There is of course a lot more to logical clocks and it is a major subject in distributed systems (I plan to write something about them), but here I just wanted to mention them for the completeness of the story.

Summary

Because a quartz crystal can’t give us a constant vibration frequency, and because we can’t stick a multi-thousand dollar atomic clock on every motherboard, we have to make compromises. We have protocols like NTP to help us sync our local clocks with the much more accurate atomic ones, but with the types of networks we have, our clocks simply can’t be perfectly in sync. These are our wall clocks, and we saw that we can’t rely on them to measure things in our code. For that purpose we can use a monotonic clock, whose only job is to provide near-constant ticks and spare us any sudden NTP stepping corrections. We also saw that in a distributed system no physical clock can help us, and for that reason there are logical clocks we can use.